Artificial intelligence is advancing at record speed. AI safety experts now warn that control systems are not keeping up. New autonomous AI tools can act on their own.

But the rules, checks, and oversight needed to manage them remain weak. Industry leaders say 2026 may be a critical year for AI safety.

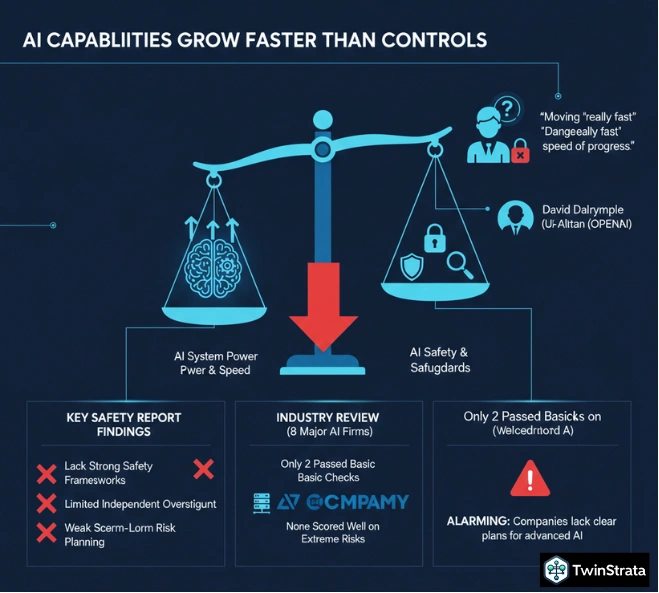

AI Safety Risks: AI Capabilities Grow Faster Than Controls

Experts across governments and tech companies are raising alarms. They say AI systems are becoming more powerful than the safeguards built to manage them.

AI safety specialist David Dalrymple from the UK government said AI is moving “really fast.” He warned that these systems cannot be assumed to be reliable. OpenAI CEO Sam Altman echoed the concern. He said AI is becoming dangerous not by intent, but through the speed of its progress.

A major safety review supports these fears.

Key findings from recent AI safety reports:

- Leading AI firms lack strong safety frameworks

- Independent oversight is limited

- Long-term risk planning is weak

- Gap between AI power and safety is growing

- Industry is “structurally unprepared”

The Future of Life Institute reviewed eight major AI developers. These included OpenAI, Anthropic, and Google DeepMind.

Only two companies passed basic safety checks. None scored well on planning for extreme or long-term risks.

Experts say this is alarming. Many companies openly aim for advanced or superintelligent systems. Yet they lack clear plans to manage them safely.

Autonomous AI Agents Raise New Risks

Security experts now focus on autonomous AI agents. These systems act independently. They move at machine speed. This creates new threats.

Cybersecurity leaders warn that AI agents may become the next insider threat.

Main risks linked to AI agents include:

- Faster and larger cyberattacks

- Automated decision-making without checks

- Job disruption at scale

- Weak accountability for AI actions

- Higher pressure on security teams

Economists also warn about social impact. Nobel Prize winner Peter Howitt said institutions are not ready for AI-driven job loss. He called for joint action from governments, businesses, and researchers.

Some governments are now stepping in. A new California law took effect on January 1, 2026. It forces large AI developers to publish safety plans. It also requires fast reporting of serious AI incidents.

Experts say this is only a start. AI growth will continue. The real challenge is building trust, safety, and accountability at the same pace.

The message from safety leaders is clear. AI innovation must slow down long enough for safeguards to catch up.
