New research from Apify and The Web Scraping Club confirms a counterintuitive trend: proxy budgets are increasing even though proxy prices have generally been falling.

Their State of Web Scraping report 2026, based on surveys of hundreds of scraping professionals, found that 65.8% of respondents used more proxies in 2025 than the year before, and 58.3% increased their total proxy spending.

Why Volume and Complexity Are Driving Proxy Costs Up Even as Per-GB Rates Drop

The explanation is straightforward: scraping operations are scaling and targets are becoming harder to reach, so total consumption, and with it total spend, rises even when per-unit costs are lower. r/webscraping has been discussing the report heavily since publication.
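To make the arithmetic concrete, here is a minimal sketch of how total spend can rise while the per-GB rate falls. Every figure in it is hypothetical and chosen only for illustration; none of it comes from the report. The function names `total_spend` and `pct_change` are likewise made up for this example.

```python
def total_spend(volume_gb: float, price_per_gb: float) -> float:
    """Total proxy spend is simply traffic volume times the per-GB rate."""
    return volume_gb * price_per_gb


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Hypothetical figures, NOT taken from the survey:
# the per-GB rate drops from 8.00 to 5.00 while traffic doubles.
last_year = total_spend(volume_gb=100, price_per_gb=8.00)
this_year = total_spend(volume_gb=200, price_per_gb=5.00)

print(f"Per-GB rate change: {pct_change(8.00, 5.00):+.1f}%")
print(f"Total spend change: {pct_change(last_year, this_year):+.1f}%")
```

With these assumed inputs, the rate falls by more than a third while the bill still grows by a quarter, because the volume increase outweighs the price cut.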

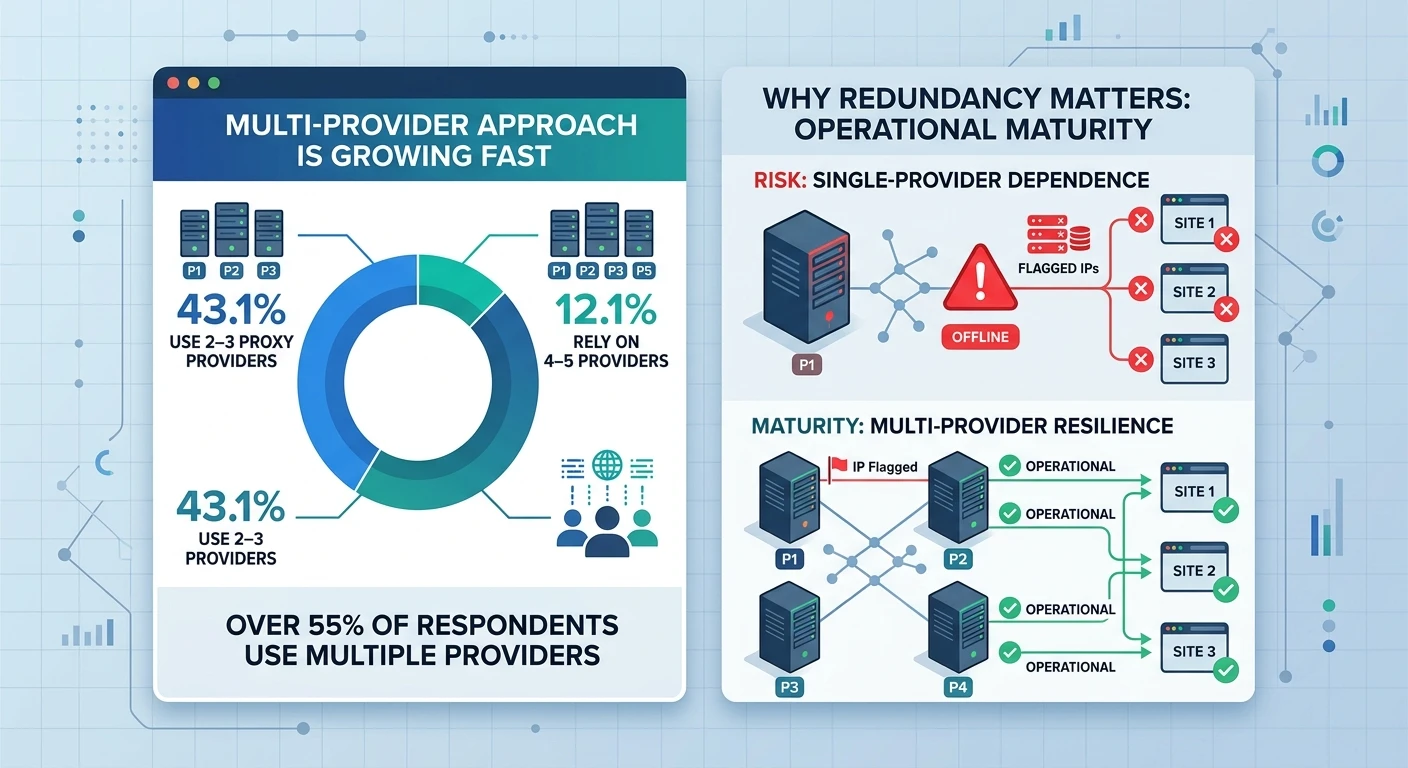

The multi-provider approach is also growing fast. 43.1% of survey respondents now use 2–3 proxy providers simultaneously, and 12.1% rely on 4–5 providers. This redundancy reflects operational maturity — single-provider dependence creates risk when a provider’s IPs get flagged on key target sites.

Professional scraping operations are treating proxy sourcing the same way SaaS teams treat cloud vendor relationships: diversified by design, with failover routing built in from day one.
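As a rough illustration of what failover routing across providers can look like, the sketch below tries each provider in priority order and moves to the next one when a request fails. It is one possible shape under stated assumptions, not a description of how any surveyed team or vendor implements it. The `ProxyProvider` class, the `fetch_with_failover` function, and the gateway URLs in the usage note are all hypothetical; only the `urllib.request` calls are standard-library APIs.

```python
import urllib.request
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class ProxyProvider:
    """Hypothetical record for one proxy vendor's gateway."""
    name: str
    proxy_url: str  # e.g. "http://user:pass@gateway.example:8000" (assumed format)


def urllib_fetch(url: str, proxy_url: str, timeout: float = 10.0) -> bytes:
    """Fetch a URL through a single proxy using only the standard library."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    opener = urllib.request.build_opener(handler)
    # urllib raises HTTPError on 4xx/5xx responses, so a block page served
    # with a 403 status surfaces as an exception and triggers failover.
    with opener.open(url, timeout=timeout) as response:
        return response.read()


def fetch_with_failover(
    url: str,
    providers: Sequence[ProxyProvider],
    fetch: Callable[[str, str], bytes] = urllib_fetch,
) -> Tuple[str, bytes]:
    """Try providers in order; return (provider name, body) from the first success."""
    errors = []
    for provider in providers:
        try:
            return provider.name, fetch(url, provider.proxy_url)
        except Exception as exc:  # broad on purpose: any failure moves to the next provider
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

A call might look like `fetch_with_failover("https://example.com", [ProxyProvider("primary", "http://proxy-a.example:8000"), ProxyProvider("backup", "http://proxy-b.example:8000")])`, where both gateway addresses are placeholders. Passing `fetch` as a parameter keeps the routing logic separate from the HTTP client, so the same loop could wrap a browser-automation session instead of `urllib`.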

What’s Driving Anti-Bot Escalation and What Scraping Teams Are Doing About It

Modern anti-bot systems no longer look at just IP addresses. They analyze behavioral patterns, device fingerprints, network characteristics, and timing signals simultaneously. Rotating user agents or switching IPs without addressing the other signals produces diminishing returns.

The scraping professionals now getting consistent results on high-protection targets — large e-commerce platforms, social media, major travel sites — are combining residential and mobile proxies with browser automation that mimics real human behavior at the session level.

AI-powered scraping is also accelerating proxy demand. Training AI models requires continuous ingestion of fresh web content, and every LLM or image model in production creates ongoing scraping load.

Bright Data’s pivot toward positioning itself as an “AI data infrastructure company” rather than a proxy provider is a strategic read on where enterprise proxy revenue is heading: not individual scraping projects but infrastructure contracts with AI labs and large-scale data platform companies.
